Usage Guide

Summary

This section describes usage guidance.


This page is a work in progress and will be updated as we improve & finalize the content. Please check back regularly for updates.

When developing an Azure solution using AVM modules, there are several aspects to consider. This page covers important concepts and provides guidance on the technical decisions involved. Each concept/topic referenced here will be further detailed in the corresponding Bicep or Terraform specific guidance.

Topics/concepts that are relevant and applicable for both Bicep and Terraform.

Leveraging the public registries (i.e., the Bicep Public Registry or the Terraform Public Registry) is the most common and recommended approach.

This allows you to leverage the latest and greatest features of the AVM modules, as well as the latest security updates. While there aren’t any prerequisites for using the public registry - no extra software component or service needs to be installed and no configuration is needed - the client machine the deployment is initiated from will need to have access to the public registry.

A private registry - that is hosted in your own environment - can store modules originating from the public registry. Using a private registry still grants you the latest version of AVM modules while allowing you to review each version of each module before admitting them to your private registry. You also have control over who can access your own private registry. Note that using a private registry means that you’re still using each module as is, without making any changes.

Inner-sourcing AVM means maintaining your own, synchronized copy of AVM modules in your own internal private registry, repositories or other storage option. Customers normally look to inner-source AVM modules when they have strict security and compliance requirements, or when they want to publish their own lightly wrapped versions of the modules to meet their specific needs; for example changing some allowed or default values for parameter or variable inputs.

This approach is more complex and requires more effort to maintain. It can be beneficial in certain scenarios, but it should not be the default, as it adds considerable overhead and requires significant skills and resources to set up and maintain.

There are many ways to approach inner-sourcing AVM modules for both Bicep and Terraform. The AVM team will be publishing guidance on this topic, based on customer experience and learnings.

You can see the AVM team talking about inner-sourcing on the AVM February 2025 community call on YouTube.

This section provides advanced guidance for developing solutions using Azure Verified Modules (AVM). It covers technical decisions and concepts that are important for building and deploying Azure solutions using AVM modules.

When implementing infrastructure in Azure leveraging IaaS and PaaS services, there are multiple options for Azure deployments. In this article we assume that a decision has been made to implement your solution, using Infrastructure-as-Code (IaC). This is best suited to allow programmatic declarative control of the target infrastructure and is ideal for projects that require repeatability and idempotency.

There are multiple language choices when implementing your solution using IaC in Azure. The Azure Verified Modules project currently supports Bicep and Terraform. The following guidance summarizes considerations that can help choose the option that best suits your requirements.

Bicep is the Microsoft first-party offering for IaC deployments. It supports Generally Available (GA) and preview features for all Azure resources and allows for modular composition of resources and solution templates. The use of simplified syntax makes IaC development intuitive and the use of the Bicep extension for VS Code provides IntelliSense and syntax validation to assist with coding. Finally, Bicep is well suited for infrastructure projects and teams that don’t require management of other cloud platforms or services outside of Azure. For a more detailed read on reasons to choose Bicep, read this article from the Bicep documentation.

HashiCorp’s Terraform is an extensible 3rd party platform that can be used across multiple cloud and on-premises platforms using multiple provider plugins. It has widespread adoption due to its simplified human-readable configuration files, common functionality, and the ability to allow a project to span multiple provider spaces.

In Azure, support is provided through two primary providers called AzureRM and AzAPI respectively. The default provider for many Azure use cases is AzureRM which is co-developed between Microsoft and HashiCorp. It includes support for generally available (GA) features, while support for new and preview features might be slightly delayed following their initial release. AzAPI is developed exclusively by Microsoft and supports all preview and GA features while being more complex to use due to the more direct interaction with Azure’s APIs. While it is possible to use both providers in a single project as needed, the best practice is to standardize on a single provider as much as is reasonable.
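As a minimal sketch of what declaring both providers in one project could look like: the version constraints below are illustrative assumptions, not values taken from this guide, and should be replaced with the versions your project has tested.

```hcl
terraform {
  required_providers {
    # Co-developed by Microsoft and HashiCorp; the usual default for GA features.
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 4.0" # assumed constraint, for illustration only
    }
    # Developed by Microsoft; closer to the Azure APIs, covers preview features.
    azapi = {
      source  = "Azure/azapi"
      version = "~> 2.0" # assumed constraint, for illustration only
    }
  }
}

# AzureRM requires a features block, even when it is empty.
provider "azurerm" {
  features {}
}

provider "azapi" {}
```

Standardizing on one provider, as recommended above, would mean dropping whichever entry the project does not need.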

Projects typically choose Terraform when they bridge multiple cloud infrastructure platforms or when the development team has previous experience coding in Terraform. Modern Integrated Development Environments (IDE) - such as Visual Studio Code - include extension support for Terraform features as well as additional Azure specific extensions. These extensions enable syntax validation and highlighting as well as code formatting and HashiCorp Cloud Platform (HCP) integration for HashiCorp Cloud customers. For a more detailed read on reasons to choose Terraform, read this article from the Terraform on Azure documentation.

Before starting the process of codifying infrastructure, it is important to develop a detailed architecture of what will be created. This should include details for:

For a production grade solution, you need to

Once the architecture is agreed upon, it is time to plan the development of your IaC code. There are several key decision points that should be considered during this phase.

The two primary methods used to create your solutions module are:

The trade-off between the two options is primarily around control vs. speed. AVM works to provide the best of both options by providing modules with opinionated and recommended practice defaults while allowing for more detailed configuration as needed. In our sample exercise we’ll be using AVM modules to demonstrate building the example solution.

When using AVM modules for your solution, there is an additional choice that should be considered. The AVM library includes both pattern and resource module types. If your architecture includes or follows a well-known pattern then a pattern module may be the right option for you. If you determine this is the case, then search the module index for pattern modules in your chosen language to see if an option exists for your scenario. Otherwise, using resource modules from the library will be your best option.

In cases where an AVM resource or pattern module isn’t available for use, review the Bicep or Terraform provider documentation to identify how to augment AVM modules with standalone resources. If you feel that additional resource or pattern modules would be useful, you can also request the creation of a pattern or resource module by creating a module proposal issue on the AVM GitHub repository.

Once the decision has been made to use AVM modules to help accelerate solution development, a decision about where those modules will be sourced from is the next key decision point. A detailed exploration of the different sourcing options can be found in the Module Sourcing section of the Concepts page. Take a moment to review the options discussed there.

For our solution we will leverage the Public Registry option by sourcing AVM modules directly from the respective Terraform and Bicep public registries. This will avoid the need to fork copies of the modules for private use.

Azure Verified Modules (AVM) for Terraform are a powerful tool that leverages the Terraform domain-specific language (DSL), industry knowledge, and an Open Source community, which together enable developers to quickly deploy Azure resources that follow Microsoft’s recommended practices for Azure.

In this article, we will walk through the Terraform specific considerations and recommended practices on developing your solution leveraging Azure Verified Modules. We’ll review some of the design features and trade-offs and include sample code to illustrate each discussion point.

You will need the following tools and components to complete this guide:

Before you begin, ensure you have these tools installed in your development environment.

Good module development should start with a good plan. Let’s first review the architecture and module design prior to developing our solution.

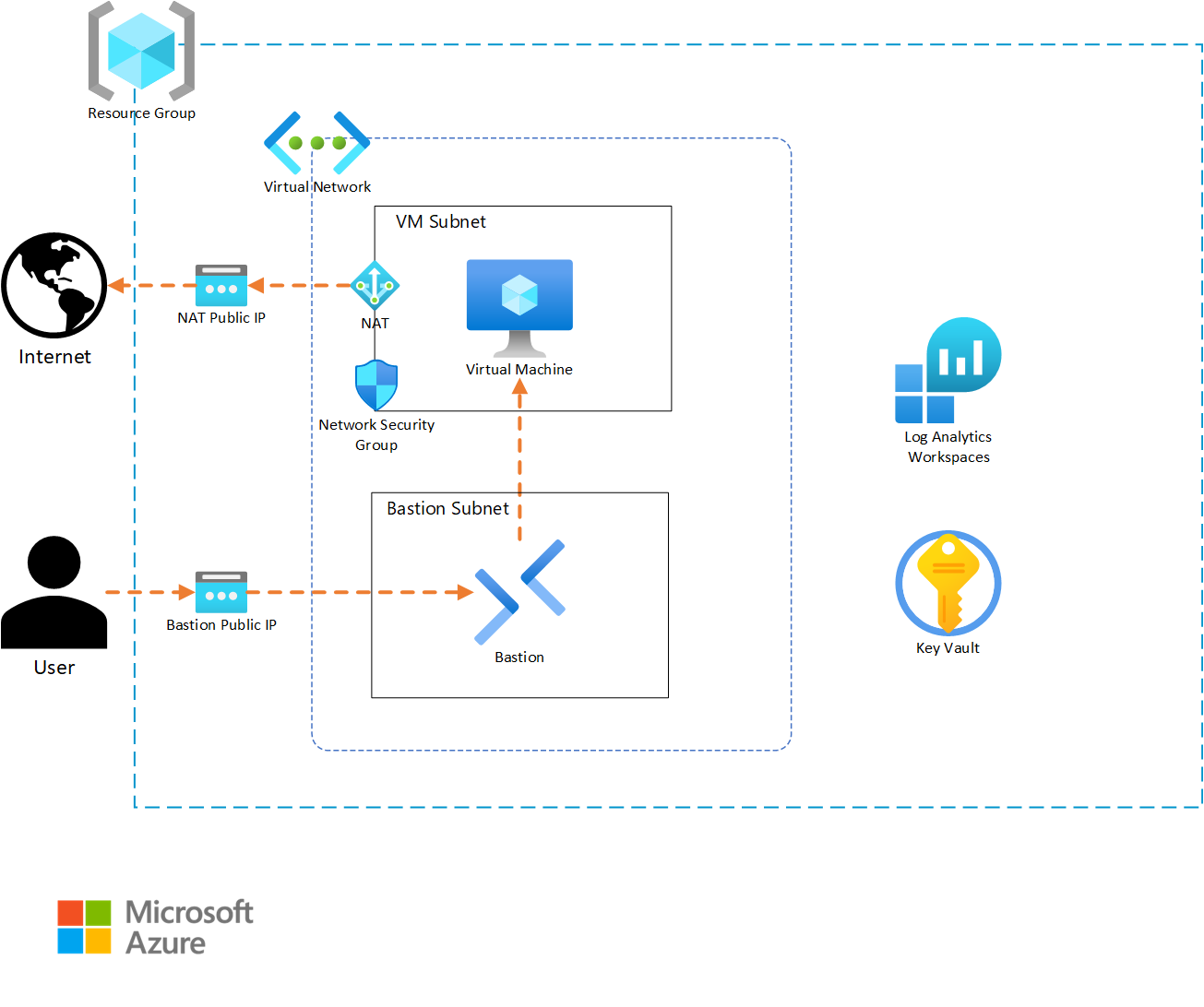

Before we begin coding, it is important to have details about what the infrastructure architecture will include. For our example, we will be building a solution that will host a simple application on a Linux virtual machine (VM).

In our design, the resource group for our solution will require appropriate tagging to comply with our corporate standards. Resources that support Diagnostic Settings must also send metric data to a Log Analytics workspace, so that the infrastructure support teams can get metric telemetry. The virtual machine will require outbound internet access to allow the application to properly function. A Key Vault will be included to store any secrets and key artifacts, and we will include a Bastion instance to allow support personnel to access the virtual machine if needed. Finally, the VM is intended to run without interaction, so we will auto-generate an SSH private key and store it in the Key Vault for the rare event of someone needing to log into the VM.

Based on this narrative, we will create the following resources:

Since our solution template (root module) is intended to be deployed multiple times, we want to develop it in a way that provides flexibility while minimizing the amount of input necessary to deploy the solution. For these reasons, we will create our module with a small set of variables that allow for deployment differentiation while still populating solution-specific defaults to minimize input. We will also separate our content into variables.tf, outputs.tf, terraform.tf, and main.tf files to simplify future maintenance.

Based on this, our file system will take the following structure:

- terraform.tf - This file holds the provider definitions and versions.
- variables.tf - This file contains the input variable definitions and defaults.
- outputs.tf - This file contains the outputs and their descriptions for use by any external modules calling this root module.
- main.tf - This file contains the core module code for creating the solution's infrastructure.
- development.tfvars - This file will contain the inputs for the instance of the module that is being deployed. Content in this file will vary from instance to instance.

Terraform will merge content from any file ending in a .tf extension in the module folder to create the full module content. Because of this, using different files is not required. We encourage file separation to allow for organizing code in a way that makes it easier to maintain. While the naming structure we’ve used is common, there are many other valid file naming and organization options that can be used.

In our example, we will use the following variables as inputs to allow for customization:

- location - The location where our infrastructure will be deployed.
- name_prefix - This will be used to preface all of the resource naming.
- virtual_network_cidr - This will be used to ensure IP uniqueness for the deployment.
- tags - The custom tags to use for each deployment.

Finally, we will export the following outputs:

- resource_group_name - This will allow for finding this deployment if there are multiples.
- virtual_machine_name - This can be used to find and log in to the VM if needed.

Now that we’ve determined our architecture and module configurations, we need to see what AVM modules exist for use in our solution. To do this, we will open the AVM Terraform pattern module index and check if there are any existing pattern modules that match our requirement. In this case, no pattern modules fit our needs. If this was a common pattern, we could open an issue on the AVM GitHub repository to get assistance from the AVM project to create a pattern module matching our requirements. Since our architecture isn’t common, we’ll continue to the next step.

When a pattern module fitting our needs doesn’t exist for a solution, leveraging AVM resource modules to build our own solution is the next best option. Review the AVM Terraform published resource module index for each of the resource types included in your architecture. For each AVM module, capture a link to the module to allow for a review of the documentation details on the Terraform Registry website.

Some of the published pattern modules cover multi-resource configurations that can sometimes be interpreted as a single resource. Be sure to check the pattern index for groups of resources that may be part of your architecture and that don’t exist in the resource module index (e.g., Virtual WAN).

For our sample architecture, we have the following AVM resource modules at our disposal. Click on each module to explore its documentation on the Terraform Registry.

We can now begin coding our solution. We will create each element individually, to allow us to test our deployment as we build it out. This will also allow us to correct any bugs incrementally, so that we aren’t troubleshooting a large number of resources at the end.

Let’s begin by configuring the provider details necessary to build our solution. Since this is a root module, we want to include any provider and Terraform version constraints for this module. We’ll periodically come back and add any needed additional providers if our design includes a resource from a new provider.

Open up your development IDE (Visual Studio Code in our example) and create a file named terraform.tf in your root directory.

Add the following code to your terraform.tf file:
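As a minimal sketch, assuming the file starts out with only a Terraform version constraint and an empty provider list, terraform.tf could contain:

```hcl
terraform {
  # Pessimistic constraint: accepts any 1.x release from 1.9 onward,
  # but not 2.0 or later.
  required_version = "~> 1.9"

  # Left empty for now; providers are added as the design requires them.
  required_providers {}
}
```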


This specifies that the required Terraform binary version to run your module can be any version between 1.9 and 2.0. This is a good compromise for allowing a range of binary versions while also ensuring support for any required features that are used as part of the module. This can include things like newly introduced functions or support for new key words.

Since we are developing our solution incrementally, we should validate our code. To do this, we will take the following steps:

- Open a terminal and move to the module directory by typing cd and then the path to the module. As an example, if the module directory was named example we would run cd example.
- Run terraform init to initialize your provider file.

You should now see a message indicating that Terraform has been successfully initialized. This indicates that our code is error free and we can continue on. If you get errors, examine the provider syntax for typos, missing quotes, or missing brackets.

Because our module is intended to be reusable, we want to provide the capability to customize each module call with those items that will differ between them. This is done by using variables to accept inputs into the module. We’ll define these inputs in a separate file named variables.tf.

Go back to the IDE, and create a file named variables.tf in the working directory.

Add the following code to your variables.tf file to configure the inputs for our example:
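A sketch of what variables.tf could contain for the four inputs described earlier. The descriptions and default values are assumptions chosen for illustration; the name virtual_network_cidr matches the variable Terraform prompts for in the plan step, and it is deliberately left without a default.

```hcl
variable "name_prefix" {
  description = "Prefix used for the names of all resources in this deployment."
  type        = string
  default     = "example" # assumed default, adjust to your naming standard
}

variable "location" {
  description = "Azure region where the infrastructure will be deployed."
  type        = string
  default     = "eastus2" # assumed default
}

variable "virtual_network_cidr" {
  description = "CIDR range for the virtual network, for example 10.0.0.0/22."
  type        = string
  # No default: every deployment must supply its own unique range.
}

variable "tags" {
  description = "Custom tags applied to the deployment."
  type        = map(string)
  default     = {}
}
```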

Note that each variable definition includes a type definition to guide module users on how to properly define an input. Also note that it is possible to set a default value. This allows module consumers to avoid setting a value if they find the default to be acceptable.

We should now test the new content we’ve created for our module. To do this, first re-run terraform init on your command line. Note that nothing has changed and the initialization completes successfully. Since we now have module content, we will attempt to run the plan as the next step of the workflow.

Type terraform plan on your command line. Note that it now asks for us to provide a value for the var.virtual_network_cidr variable. This is because we don’t provide a default value for that input so Terraform must have a valid input before it can continue. Type 10.0.0.0/22 into the input and press enter to allow the plan to complete. You should now see a message indicating that Your infrastructure matches the configuration and that no changes are needed.

There are multiple ways to provide input to the module we’re creating. We will create a tfvars file that can be supplied during plan and apply stages to minimize the need for manual input. tfvars files are a nice way to document inputs as well as allow for deploying different versions of your module. This is useful if you have a pipeline where infrastructure code is deployed first for development, and then is deployed for QA, staging, or production with different input values.

In your IDE, create a new file named development.tfvars in your working directory.

Now add the following content to your development.tfvars file.
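For illustration, a development.tfvars along these lines would set every input. All values shown are hypothetical placeholders, and the variable names assume the inputs are declared as name_prefix, location, virtual_network_cidr, and tags.

```hcl
# Hypothetical values for a development instance of the module.
location             = "eastus2"
name_prefix          = "avmdemo"
virtual_network_cidr = "10.0.0.0/22"

tags = {
  environment = "development"
  owner       = "platform-team"
}
```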

Note that each variable has a value defined. Although only inputs without default values are required, we include values for all of the inputs for clarity. Consider doing this in your environments so that someone looking at the tfvars files has a full picture of what values are being set.

Re-run the terraform plan, but this time, reference the .tfvars file by using the following command: terraform plan -var-file=development.tfvars. You should get a successful completion without needing to manually provide inputs.

Now that we’ve created the supporting files, we can start building the actual infrastructure code in our main file. We will add one AVM resource module at a time so that we can test each as we implement them.

Return to your IDE and create a new file named main.tf.

In Azure, we need a resource group to hold any infrastructure resources we create. This is a simple resource that typically wouldn’t require an AVM module, but we’ll include the AVM module so we can take advantage of the Role-Based Access Control (RBAC) interface if we need to restrict access to the resource group in future versions.

First, let’s visit the Terraform registry documentation page for the resource group and explore several key sections.

- Locate the Provision Instructions box on the right-hand side of the page. This contains the module source and version details which allows us to copy the latest version syntax without needing to type everything ourselves.
- Review the Readme tab in the middle of the page. It contains details about all required and optional inputs, resources that are created with the module, and any outputs that are defined. If you want to explore any of these items in detail, each element has a tab that you can review as needed.
- Explore the Examples drop-down, which contains functioning examples for the AVM module. These showcase a good example of using copy/paste to bootstrap module code and then modify it for your specific purpose.

Now that we’ve explored the registry content, let’s add a resource group to our module.

First, copy the content from the Provision Instructions box into our main.tf file.

On the module’s documentation page, go to the Inputs tab. Review the Required Inputs section. These are the values that don’t have defaults and are the minimum required values to deploy the module. There are additional inputs in the Optional Inputs section that can be used to configure additional module functionality. Review these inputs and determine which values you would like to define in your AVM module call.

Now, replace the # insert the 2 required variables here comment with the following code to define the module inputs. Our main.tf code should look like the following:
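A minimal sketch of the resulting module call, assuming the two required inputs are name and location and that the prefix variable is declared as name_prefix. The version pin is left as a comment because the value should be copied from the Provision Instructions box rather than from this example, and the optional tags input is an assumption to verify on the Inputs tab.

```hcl
module "avm-res-resources-resourcegroup" {
  source = "Azure/avm-res-resources-resourcegroup/azurerm"
  # version = "..." # copy the current version from Provision Instructions

  # Required inputs.
  name     = "${var.name_prefix}-rg"
  location = var.location

  # Optional input (assumed): corporate tagging for the resource group.
  tags = var.tags
}
```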

Note how we’ve used the prefix variable and Terraform interpolation syntax to dynamically name the resource group. This allows for module customization and re-use. Also note that even though we chose to use the default module name of avm-res-resources-resourcegroup, we could modify the name of the module if needed.

After saving the file, we want to test our new content. To do this, return to the command line and first run terraform init. Notice how Terraform has downloaded the module code, as well as providers that the module requires. In this case, you can see the azurerm, random, and modtm providers were downloaded.

Let’s now deploy our resource group. First, let’s run a plan operation to review what will be created. Type terraform plan -var-file=development.tfvars and press enter to initiate the plan.

Notice that we get an error indicating that we are Missing required argument and that for the azurerm provider, we need to provide a features argument. The addition of the resource group AVM resource requires that the azurerm provider be installed to provision resources in our module. This provider requires a features block in its provider definition that is missing in our configuration.

Return to the terraform.tf file and add the following content to it. Note how the features block is currently empty. If we needed to activate any feature flags in our module, we could add them here.
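As a sketch, the updated terraform.tf might then read as follows. The azurerm version constraint is an assumption for illustration; pick the constraint that matches the provider release you have tested.

```hcl
terraform {
  required_version = "~> 1.9"

  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 4.0" # assumed constraint
    }
  }
}

provider "azurerm" {
  # Empty for now; provider feature flags would be set inside this block.
  features {}
}
```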

Re-run terraform plan -var-file=development.tfvars now that we have updated the features block.

Note that we once again get an error. This time, the error indicates that subscription_id is a required provider property for plan/apply operations. This is a change that was introduced as part of the version 4 release of the AzureRM provider. We need to supply the ID of the deployment subscription where our resources will be created.

First, we need to get the subscription ID value. We will use the portal for this exercise, but using the Azure CLI, PowerShell, or the resource graph will also work to retrieve this value.

- Open the Azure portal and type Subscriptions in the search field at the top middle of the page.
- Select Subscriptions from the services menu in the search drop-down.
- Open your deployment subscription, locate the Subscription ID field on the overview page, and click the copy button to copy it to the clipboard.

Secondly, we need to update Terraform so that it can use the subscription ID. There are multiple ways to provide a subscription ID to the provider, including adding it to the provider block or using environment variables. For this scenario we’ll use environment variables to set the values so that we don’t have to re-enter them on each run. This also keeps us from storing the subscription ID in our code since it is considered a sensitive value. Select a command from the list below based on your operating system.

- Linux/macOS (bash): export ARM_SUBSCRIPTION_ID=<your ID here>
- Windows (Command Prompt): set ARM_SUBSCRIPTION_ID=<your ID here>

Finally, we should now be able to complete our plan operation by re-running terraform plan -var-file=development.tfvars. Note that the plan will create three resources, two for telemetry and one for the resource group.

We can complete testing by implementing the resource group. Run terraform apply -var-file="development.tfvars" and type yes and press enter when prompted to accept the changes. Terraform will create the resource group and notify you with an Apply complete message and a summary of the resources that were added, changed, and destroyed.

We can now continue by adding the Log Analytics Workspace to our main.tf file. We will follow a workflow similar to what we did with the resource group.

- Copy the module content from the Provision Instructions portion of the page into the main.tf file.

This time, instead of manually supplying module inputs, we will copy module content from one of the examples to minimize the amount of typing required. In most examples, the AVM module call is located at the bottom of the example.

- Open the Examples drop-down menu in the documentation and select the default example from the menu. You will see a fully functioning example code which includes the module and any supporting resources. Since we only care about the workspace resource from this example, we can scroll to the bottom of the code block and find the module "log_analytics_workspace" line.
- Copy the inputs from that module block into our module call. Note that the examples use a relative path (../..) for the module source value which will not work in our module call. To work around this, we copied those values from the provision instructions section of the module documentation in a previous step.

The Log Analytics module content should look like the following code block. For simplicity, you can also copy this directly to avoid multiple copy/paste actions.
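A hedged sketch of the Log Analytics module call. The input names for retention and SKU, the resource group output references (resource.location and name), and the values themselves are assumptions to be checked against the module's Inputs and Outputs tabs on the Terraform Registry.

```hcl
module "avm-res-operationalinsights-workspace" {
  source = "Azure/avm-res-operationalinsights-workspace/azurerm"
  # version = "..." # copy the current version from Provision Instructions

  enable_telemetry = true
  name             = "${var.name_prefix}-law"

  # Implicit references: Terraform creates the resource group first.
  # Output names are assumed; confirm them on the resource group module page.
  location            = module.avm-res-resources-resourcegroup.resource.location
  resource_group_name = module.avm-res-resources-resourcegroup.name

  # Assumed optional inputs with illustrative values.
  log_analytics_workspace_retention_in_days = 30
  log_analytics_workspace_sku               = "PerGB2018"
}
```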

Again, we will need to run terraform init to allow Terraform to initialize a copy of the AVM Log Analytics module.

Now, we can deploy the Log Analytics workspace by running terraform apply -var-file="development.tfvars", typing yes and pressing enter. Note that Terraform will only create the new Log Analytics resources since the resource group already exists. This is one of the key benefits of deploying using Infrastructure as Code (IaC) tools like Terraform.

Note that we ran the terraform apply command without first running terraform plan. Because terraform apply runs a plan before prompting for the apply, we opted to shorten the instructions by skipping the explicit plan step. If you are testing in a live environment, you may want to run the plan step and save the plan as part of your governance or change control processes.

Our solution calls for a simple Key Vault implementation to store virtual machine secrets. We’ll follow the same workflow for deploying the Key Vault as we used for the previous resource group and Log Analytics workspace resources. However, since Key Vaults require data roles to manage secrets and keys, we will need to use the RBAC interface and a data resource to configure Role-Based Access Control (RBAC) during the deployment.

For this exercise, we will provision the deployment user with data rights on the Key Vault. In your environment, you will likely want to either provide additional roles as inputs or statically assign users or groups to the Key Vault data roles. For simplicity we also set the Key Vault to have public access enabled, because we cannot dictate a private deployment environment. In an environment where your deployment machine is on a private network, it is recommended to restrict public access for the Key Vault.

Before we implement the AVM module for the Key Vault, we want to use a data resource to read the client details about the user context of the current Terraform deployment.

Add the following line to your main.tf file and save it.
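A one-line sketch using the AzureRM client configuration data source. The label this is an assumption; any label works as long as later references use the same one.

```hcl
# Exposes tenant_id and object_id of the identity running this deployment.
data "azurerm_client_config" "this" {}
```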

Key Vaults use a global namespace, which means that we will also need to add a randomization resource to randomize the name and avoid potential name collisions with other Key Vault deployments. We will use Terraform’s random provider to generate the random string which we will append to the Key Vault name. Add the following code to your main module to create the random_string resource we will use for naming.
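A sketch of the naming resource. The length and character settings are assumptions, chosen so the suffix stays short and only contains characters that are valid in a Key Vault name.

```hcl
resource "random_string" "name_suffix" {
  length  = 4     # assumed length; keeps the full vault name short
  special = false # Key Vault names allow only alphanumerics and hyphens
  upper   = false
}
```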

Now we can continue with adding the AVM Key Vault module to our solution.

- Copy the module content from the Provision Instructions portion of the page into the main.tf file.
- Use the Create secret example to fill out our module.
- Copy the name, location, enable_telemetry, resource_group_name, tenant_id, and role_assignments value content from the example and paste it into the new Key Vault module in your solution.
- Update the name value to "${var.name_prefix}-kv-${random_string.name_suffix.result}".
- Update the location and resource_group_name values to the same implicit resource group module references we used in the Log Analytics workspace.
- Set the enable_telemetry value to true.
- Leave the tenant_id and role_assignments values set to the same values that are in the example.

Our architecture calls for us to include a diagnostic settings configuration for each resource that supports it. We’ll use the diagnostic-settings example to copy this content.

- Select the diagnostic-settings option from the examples drop-down.
- Copy the diagnostic_settings value and paste it into the Key Vault module block we’re building in main.tf.
- Update the workspace_resource_id value to be an implicit reference to the output from the previously implemented Log Analytics module (module.avm-res-operationalinsights-workspace.resource_id in our code).

Finally, we will allow public access, so that our deployer machine can add secrets to the Key Vault. If your environment doesn’t allow public access for Key Vault deployments, locate the public IP address of your deployer machine (this may be an external NAT IP for your network) and add it to the network_acls.ip_rules list value using CIDR notation.

- Set the network_acls input to null in your module block for the Key Vault.

Your Key Vault module definition should now look like the following:
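A hedged sketch of the Key Vault module call after these steps. It assumes a data source declared as data.azurerm_client_config.this, a prefix variable named name_prefix, and a "Key Vault Administrator" role for the deployment user; the map keys and the diagnostic setting name are illustrative.

```hcl
module "avm-res-keyvault-vault" {
  source = "Azure/avm-res-keyvault-vault/azurerm"
  # version = "..." # copy the current version from Provision Instructions

  enable_telemetry    = true
  name                = "${var.name_prefix}-kv-${random_string.name_suffix.result}"
  location            = module.avm-res-resources-resourcegroup.resource.location
  resource_group_name = module.avm-res-resources-resourcegroup.name
  tenant_id           = data.azurerm_client_config.this.tenant_id

  # null removes the network restrictions so the deployer can write secrets.
  network_acls = null

  # Standard AVM diagnostic settings interface.
  diagnostic_settings = {
    to_la = {
      name                  = "${var.name_prefix}-kv-diags"
      workspace_resource_id = module.avm-res-operationalinsights-workspace.resource_id
    }
  }

  # Standard AVM role assignments interface: data rights for the deployer.
  role_assignments = {
    deployment_user_kv_admin = {
      role_definition_id_or_name = "Key Vault Administrator" # assumed role
      principal_id               = data.azurerm_client_config.this.object_id
    }
  }
}
```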

One of the core values of AVM is the standard configuration for interfaces across modules. The Role Assignments interface we used as part of the Key Vault deployment is a good example of this.

Continue the incremental testing of your module by running another terraform init and terraform apply -var-file="development.tfvars" sequence.

Our architecture calls for a NAT Gateway to allow virtual machines to access the internet. We will use the NAT Gateway resource_id output in future modules to link the virtual machine subnet.

- Copy the module definition from the Provision Instructions card from the module main page.
- Copy the remaining module content from the default example excluding the subnet associations map, as we will do the association when we build the vnet.
- Update the location and resource_group_name values using implicit references from our resource group module.
- Update each of the name values to use the name_prefix variable.

Review the following code to see each of these changes.
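A sketch of the NAT Gateway module call under these changes. The public_ips input and its map key are assumptions based on the module's default example and should be confirmed on the Terraform Registry.

```hcl
module "avm-res-network-natgateway" {
  source = "Azure/avm-res-network-natgateway/azurerm"
  # version = "..." # copy the current version from Provision Instructions

  enable_telemetry    = true
  name                = "${var.name_prefix}-natgw"
  location            = module.avm-res-resources-resourcegroup.resource.location
  resource_group_name = module.avm-res-resources-resourcegroup.name

  # Assumed input: public IP the gateway uses for outbound traffic.
  public_ips = {
    public_ip_1 = {
      name = "${var.name_prefix}-natgw-pip"
    }
  }
}
```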

Continue the incremental testing of your module by running another terraform init and terraform apply -var-file="development.tfvars" sequence.

Our architecture calls for a Network Security Group (NSG) allowing SSH access to the virtual machine subnet. We will use the NSG AVM resource module to accomplish this task.

- Copy the module definition from the Provision Instructions card from the module main page.
- Copy the remaining module content from the example_with_NSG_rule example.
- Update the location and resource_group_name values using implicit references from our resource group module.
- Update the name value using the name_prefix variable interpolation as we did with the other modules.
- Copy rule02 from the locals nsg_rules map and paste it between two curly braces to create the security_rules attribute in the NSG module we’re building.
- Change the map key to "rule01" from "rule02".
- Update the destination_port_ranges list to be ["22"].

Upon completion the code for the NSG module should be as follows:
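A sketch of the NSG module call with a single inbound SSH rule. The rule's name, priority, and address prefixes are illustrative assumptions; in a real deployment the source prefix would normally be narrowed.

```hcl
module "avm-res-network-networksecuritygroup" {
  source = "Azure/avm-res-network-networksecuritygroup/azurerm"
  # version = "..." # copy the current version from Provision Instructions

  name                = "${var.name_prefix}-vm-subnet-nsg"
  location            = module.avm-res-resources-resourcegroup.resource.location
  resource_group_name = module.avm-res-resources-resourcegroup.name

  security_rules = {
    "rule01" = {
      name                       = "${var.name_prefix}-ssh"
      access                     = "Allow"
      direction                  = "Inbound"
      priority                   = 200 # assumed priority
      protocol                   = "Tcp"
      source_address_prefix      = "*" # assumed; restrict where possible
      source_port_range          = "*"
      destination_address_prefix = "*"
      destination_port_ranges    = ["22"]
    }
  }
}
```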

Continue the incremental testing of your module by running another terraform init and terraform apply -var-file="development.tfvars" sequence.

We can now continue the build-out of our architecture by configuring the virtual network (vnet) deployment. This will follow a similar pattern as the previous resource modules, but this time, we will also add some network functions to help us customize the subnet configurations.

- Copy the module definition from the Provision Instructions card from the module main page.
- Use the complete example as a source to copy our content.
- Copy the resource_group_name, location, name, and address_space lines and replace their values with our deployment specific variables or module references.
- Copy the subnets map and duplicate the subnet0 map for each subnet.
- Update the name values for each subnet so that they are unique.
- Use the cidrsubnet function to dynamically generate the CIDR range for each subnet. You can explore the function documentation for more details on how it can be used.
- Populate the nat_gateway object on subnet0 with the resource_id output from our NAT Gateway module.
- Add the network_security_group attribute to the subnet0 definition and replace the value with the resource_id output from the NSG module.

After making these changes our virtual network module call code will be as follows:
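A hedged sketch of the virtual network module call. The subnet sizes are assumptions: with a /22 input, cidrsubnet(var.virtual_network_cidr, 1, 0) yields the first /23 and cidrsubnet(var.virtual_network_cidr, 3, 4) yields a /25 in the second half, so the two ranges do not overlap. The module labels for the NAT Gateway and NSG, and the attribute names inside subnets, should be checked against the module documentation.

```hcl
module "avm-res-network-virtualnetwork" {
  source = "Azure/avm-res-network-virtualnetwork/azurerm"
  # version = "..." # copy the current version from Provision Instructions

  enable_telemetry    = true
  name                = "${var.name_prefix}-vnet"
  location            = module.avm-res-resources-resourcegroup.resource.location
  resource_group_name = module.avm-res-resources-resourcegroup.name
  address_space       = [var.virtual_network_cidr]

  diagnostic_settings = {
    sendToLogAnalytics = {
      name                  = "${var.name_prefix}-vnet-diagnostic"
      workspace_resource_id = module.avm-res-operationalinsights-workspace.resource_id
    }
  }

  subnets = {
    subnet0 = {
      name             = "${var.name_prefix}-vm-subnet"
      address_prefixes = [cidrsubnet(var.virtual_network_cidr, 1, 0)]
      nat_gateway = {
        id = module.avm-res-network-natgateway.resource_id
      }
      network_security_group = {
        id = module.avm-res-network-networksecuritygroup.resource_id
      }
    }
    bastion = {
      # Azure Bastion requires this exact subnet name.
      name             = "AzureBastionSubnet"
      address_prefixes = [cidrsubnet(var.virtual_network_cidr, 3, 4)]
    }
  }
}
```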

Note how the Log Analytics workspace reference ends in resource_id. Each AVM module is required to export its Azure resource ID with the resource_id name to allow for consistent references.

Continue the incremental testing of your module by running another terraform init and terraform apply -var-file="development.tfvars" sequence.

We want to allow for secure remote access to the virtual machine for configuration and troubleshooting tasks. We’ll use Azure Bastion to accomplish this objective following a similar workflow to our other resources.

1. Copy the module definition from the Provision Instructions card from the module main page.
2. Open the Simple Deployment example.
3. Set location and resource_group_name using implicit references from our resource group module.
4. Set the name using name_prefix variable interpolation as we did with the other modules.
5. Update the subnet_id value to include an implicit reference to the bastion keyed subnet from our virtual network module.

Our architecture calls for diagnostic settings to be configured on the Azure Bastion resource. In this case, there aren’t any examples that include this configuration. However, since the diagnostic settings interface is one of the standard interfaces in Azure Verified Modules, we can just copy the interface definition from our virtual network module.

1. Locate the virtual network module call in your code and copy the diagnostic_settings value from it.
2. Paste the diagnostic_settings value into the code for our Bastion module.

The new code we added for the Bastion resource will be as follows:

Pay attention to the subnet_id syntax. In the virtual network module, the subnets are created as a sub-module allowing us to reference each of them using the map key that was defined in the subnets input. Again, we see the consistent output naming with the resource_id output for the sub-module.
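The reference pattern described above can be sketched as follows. The module label `avm-res-network-virtualnetwork` and the local name are assumptions based on the naming used in this exercise; the local value exists only to make the fragment readable on its own.

```hcl
locals {
  # "subnets" is keyed by the map keys passed to the module's subnets input;
  # each entry exposes the standard resource_id output.
  # The module label below is assumed, not taken from the original listing.
  bastion_subnet_id = module.avm-res-network-virtualnetwork.subnets["bastion"].resource_id
}
```

In the Bastion module call, the same expression would be assigned to subnet_id, either directly or through local.bastion_subnet_id.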

Continue the incremental testing of your module by running another terraform init and terraform apply -var-file="development.tfvars" sequence.

The final step in our deployment will be our application virtual machine. We’ve had good success with our workflow so far, so we’ll use it for this step as well.

1. Copy the module definition from the Provision Instructions card from the module main page.
2. Open the linux_default example.
3. Set location and resource_group_name using implicit references from our resource group module.
4. Set the name using name_prefix variable interpolation as we did with the other modules and include the output from the random_string.name_suffix resource to add uniqueness.
5. Set the account_credentials.key_vault_configuration.resource_id value to reference the resource_id output from the Key Vault module.
6. Set the private_ip_subnet_resource_id value to an implicit reference to the subnet0 subnet output from the virtual network module.

Because the default Linux example doesn’t include diagnostic settings, we need to add that content in a different way. Since the diagnostic settings interface has a standard schema, we can copy the diagnostic_settings input from our virtual network module.

1. Locate the virtual network module call in your code and copy the diagnostic_settings map from it.
2. Paste the diagnostic_settings content into your virtual machine module code.
3. Update the name value to reflect that it applies to the virtual machine.

The new code we added for the virtual machine resource will be as follows:

Continue the incremental testing of your module by running another terraform init and terraform apply -var-file="development.tfvars" sequence.

The final piece of our module is to export any values that may need to be consumed by module users. From our architecture, we’ll export the resource group name and the virtual machine resource name.

1. Open the outputs.tf file in your IDE.
2. Add an output named resource_group_name and set the value to an implicit reference to the resource group module’s name output. Include a brief description for the output.
3. Add an output named virtual_machine_name and set the value to an implicit reference to the virtual machine module’s name output. Include a brief description for the output.

The new code we added for the outputs will be as follows:
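A minimal sketch of the two outputs, assuming the resource group and virtual machine module calls are labeled `avm-res-resources-resourcegroup` and `avm-res-compute-virtualmachine` (hypothetical labels; use whatever labels your main.tf defines). The description strings are illustrative.

```hcl
# outputs.tf - values exposed to consumers of this module.
output "resource_group_name" {
  description = "Name of the resource group that contains the solution resources."
  value       = module.avm-res-resources-resourcegroup.name # module label assumed
}

output "virtual_machine_name" {
  description = "Name of the application virtual machine."
  value       = module.avm-res-compute-virtualmachine.name # module label assumed
}
```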

Because no new modules were created, we don’t need to run terraform init to test this change. Run terraform apply -var-file="development.tfvars" to see the new outputs that have been created.

It is a recommended practice to define the required versions of the providers for your module to ensure consistent behavior when it is being run. In this case we are going to be slightly permissive and allow minor and patch versions to increase, since those are not supposed to include breaking changes. In a production environment, you would likely want to pin to a specific version to guarantee behavior.

1. Run terraform init to review the providers and versions that are currently installed.
2. Update the terraform.tf file’s required providers field for each provider listed in the downloaded providers.

The updated code we added for the providers in the terraform.tf file will be as follows:
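As a hedged illustration of the "slightly permissive" approach, the pessimistic constraint operator `~>` with a major.minor value allows newer minor and patch releases while blocking the next major version. The provider list and version numbers below are assumptions; use the providers and versions that terraform init reports for your configuration.

```hcl
# terraform.tf - provider requirements (versions shown are illustrative).
terraform {
  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 4.0" # any 4.x release, but not 5.0
    }
    random = {
      source  = "hashicorp/random"
      version = "~> 3.6" # 3.6 or later within 3.x, but not 4.0
    }
  }
}
```

To pin exactly, as suggested for production, the constraint would instead name a single version, for example version = "4.0.0" with whichever release you have validated.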

Congratulations on successfully implementing a solution using Azure Verified Modules! You were able to build out our sample architecture using module documentation and taking advantage of features like standard interfaces and pre-defined defaults to simplify the development experience.

This was a long exercise and mistakes can happen. If you’re getting errors or a resource is incomplete and you want to see the final main.tf, expand the following code block to see the full file.

AVM modules provide several key advantages over writing raw Terraform templates:

As you continue your journey with Azure and AVM, remember that this approach can be applied to more complex architectures as well. The modular nature of AVM allows you to mix and match components to build solutions that meet your specific needs while adhering to Microsoft’s Well-Architected Framework.

By using AVM modules as building blocks, you can focus more on your solution architecture and less on the intricacies of individual resource configurations, ultimately leading to faster development cycles and more reliable deployments.

For additional learning, it can be helpful to experiment with modifying this solution. Here are some ideas you can try if you have time and would like to experiment further.

- Use the managed_identities interface to add a system-assigned managed identity to the virtual machine and give it Key Vault Administrator rights on the Key Vault.
- Use the tags interface to assign tags directly to one or more resources.
- Update the tfvars files to match any inputs you change.

Once you have completed this set of exercises, it is a good idea to clean up your resources to avoid incurring costs for them. This can be done by typing terraform destroy -var-file=development.tfvars and entering yes when prompted.

To be covered in separate, future articles.

To make this solution enterprise-ready, you need to consider the following:

This QuickStart guide offers step-by-step instructions for integrating Azure Verified Modules (AVM) into your solutions. It includes the initial setup, essential tools, and configurations required to deploy and manage your Azure resources efficiently using AVM.

The AVM Key Vault resource module, used as an example in this chapter, simplifies the deployment and management of Azure Key Vaults, ensuring secure storage and access to your secrets, keys, and certificates.

Using AVM ensures that your infrastructure-as-code deployments follow Microsoft’s best practices and guidelines, providing a consistent and reliable foundation for your cloud solutions. AVM helps accelerate your development process, reduce the risk of misconfigurations, and enhance the security and compliance of your applications.

The default values provided by AVM are generally safe, as they follow best practices and ensure a secure and reliable setup. However, it is important to review these values to ensure they meet your specific requirements and compliance needs. Customizing the default values may be necessary to align with your organization’s policies and the specific needs of your solution.

You can find examples and detailed documentation for each AVM module in their respective code repository’s README.MD file, which details features, input parameters, and outputs. The module’s documentation also provides comprehensive usage examples, covering various scenarios and configurations. Additionally, you can explore the module’s source code repository. This information will help you understand the full capabilities of the module and how to effectively integrate it into your solutions.

This guide explains how to use an Azure Verified Module (AVM) in your Terraform workflow. With AVM modules, you can quickly deploy and manage Azure infrastructure without writing extensive code from scratch.

In this guide, you will deploy a Key Vault resource and generate and store a key.

This article is intended for a typical ‘infra-dev’ user (cloud infrastructure professional) who is new to Azure Verified Modules and wants to learn how to deploy a module in the easiest way using AVM. The user has a basic understanding of Azure and Terraform.

For additional Terraform resources, try a tutorial on the HashiCorp website or study the detailed documentation.

You will need the following tools and components to complete this guide:

Before you begin, ensure you have these tools installed in your development environment.

In this scenario, you need to deploy a Key Vault resource and some of its child resources, such as a key. Let’s find the AVM module that will help us achieve this.

There are two primary ways for locating published Terraform Azure Verified Modules:

The easiest way to find published AVM Terraform modules is by searching the Terraform Registry. Follow these steps to locate a specific module, as shown in the video above.

It is possible to discover other unofficial modules with avm in the name using this search method. Look for the Partner tag in the module title to determine if the module is part of the official set.

Searching the Azure Verified Modules indexes is the most complete way to discover published as well as planned modules - shown as proposed. As presented in the video above, use the following steps to locate a specific module on the AVM website:

Since the Key Vault module used as an example in this guide is published as an AVM resource module, it can be found under the resource modules section in the AVM Terraform module index.

1. Search the index for the module name (for example, with the CTRL + F keyboard shortcut).

Once you have identified the AVM module in the Terraform Registry, you can find detailed information about the module’s functionality, components, input parameters, outputs and more. The documentation also provides comprehensive usage examples, covering various scenarios and configurations.

Explore the Key Vault module’s documentation and usage examples to understand its functionality, input variables, and outputs.

In this example, you want to deploy a key in a new Key Vault instance without needing to provide other parameters. The AVM Key Vault resource module provides these capabilities and does so with security and reliability being core principles. The default settings of the module also apply the recommendations of the Well-Architected Framework where possible and appropriate.

Note how the create-key example seems to do what you need to achieve.

Now that you have found the module details, you can use the content from the Terraform Registry to speed up your development in the following ways:

Each deployment method includes a section below so that you can choose the method which best fits your needs.

For Azure Key Vaults, the name must be globally unique. When you deploy the Key Vault, ensure you select a name that is alphanumeric, twenty-four characters or less, and unique enough to ensure no one else has used the name for their Key Vault. If the name has been used previously, you will get an error.
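One way to reduce the chance of a name collision, offered as a minimal sketch rather than a required pattern, is to append a short random suffix to a fixed prefix. The prefix `kvdemo`, the resource label `kv_suffix`, and the suffix length are hypothetical choices; the combined name here is 12 characters, which stays within the 24-character limit.

```hcl
terraform {
  required_providers {
    random = {
      source = "hashicorp/random"
    }
  }
}

# Six lowercase alphanumeric characters; regenerated only if the resource is replaced.
resource "random_string" "kv_suffix" {
  length  = 6
  special = false
  upper   = false
}

locals {
  # Hypothetical prefix plus the random suffix, for example "kvdemo" followed by six characters.
  key_vault_name = "kvdemo${random_string.kv_suffix.result}"
}

output "key_vault_name" {
  value = local.key_vault_name
}
```

The resulting local.key_vault_name could then be passed to the Key Vault module's name input in place of a hand-picked value.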

Use the following steps as a template for using examples to bootstrap your new solution code. The Key Vault resource module is used here as an example, but in practice you may choose any module that applies to your scenario.

In your IDE - Visual Studio Code in our example - create the main.tf file for your new solution.

Paste the content from the clipboard into main.tf.

AVM examples frequently use naming and/or region selection AVM utility modules to generate deployment region and/or naming values as well as any default values for required fields. If you want to use a specific region name or other custom resource values, remove the existing region and naming module calls and replace example input values with the new desired custom input values.

Once supporting resources such as resource groups have been modified, locate the module call for the AVM module - i.e., module "keyvault".

AVM module examples use dot notation for a relative reference that is useful during module testing. However, you will need to replace the relative reference with a source reference that points to the Terraform Registry source location. In most cases, this source reference has been left as a comment in the module example to simplify replacing the existing source dot reference. Perform the following two actions to update the source:

1. Delete the existing source definition that uses a dot reference - i.e., source = "../../".
2. Uncomment the Terraform Registry source reference by deleting the # sign at the start of the commented source line - i.e., source = "Azure/avm-res-keyvault-vault/azurerm".

If the module example does not include a commented Terraform Registry source reference, you will need to copy it from the module’s main documentation page. Use the following steps to do so:

1. Select the line that starts with source = from the code block - e.g., source = "Azure/avm-res-keyvault-vault/azurerm". Copy it onto the clipboard.
2. In your code, paste it over the existing dot reference - i.e., source = "../../".

AVM module examples use a variable to enable or disable the telemetry collection. Update the enable_telemetry input value to true or false - e.g., enable_telemetry = true.

Save your main.tf file changes and then proceed to the guide section for running your solution code.

    module "avm-res-keyvault-vault" {
      source              = "Azure/avm-res-keyvault-vault/azurerm"
      version             = "0.9.1"
      name                = "<custom_name_here>"
      resource_group_name = azurerm_resource_group.this.name
      location            = azurerm_resource_group.this.location
      tenant_id           = data.azurerm_client_config.this.tenant_id

      # Key to create in the new vault.
      keys = {
        cmk_for_storage_account = {
          key_opts = [
            "decrypt",
            "encrypt",
            "sign",
            "unwrapKey",
            "verify",
            "wrapKey"
          ]
          key_type = "RSA"
          name     = "cmk-for-storage-account"
          key_size = 2048
        }
      }

      # Grant the deploying identity rights to manage keys in the vault.
      role_assignments = {
        deployment_user_kv_admin = {
          role_definition_id_or_name = "Key Vault Administrator"
          principal_id               = data.azurerm_client_config.this.object_id
        }
      }

      # Give the role assignment time to propagate before creating the key.
      wait_for_rbac_before_key_operations = {
        create = "60s"
      }
    }

Use the following steps as a guide for the custom implementation of an AVM Module in your solution code. This instruction path assumes that you have an existing Terraform file that you want to add the AVM module to.

1. Set the module’s name input to a custom value - e.g., name = "custom_name".

After completing your solution development, you can move to the deployment stage. Follow these steps for a basic Terraform workflow:

Open the command line and log in to Azure using the Azure CLI:

    az login

If your account has access to multiple tenants, you may need to modify the command to az login --tenant <tenant id> where “<tenant id>” is the GUID for the target tenant.

After logging in, select the target subscription from the list of subscriptions that you have access to.

Change the path to the directory where your completed terraform solution files reside.

Many AVM modules depend on the AzureRM 4.0 Terraform provider, which mandates that a subscription ID is configured. If you receive an error indicating that subscription_id is a required provider property, you will need to set a subscription ID value for the provider. For Unix-based systems (Linux or macOS), you can configure this by running export ARM_SUBSCRIPTION_ID=<your subscription guid> on the command line. On Microsoft Windows, you can perform the same operation by running set ARM_SUBSCRIPTION_ID=<your subscription guid> from the Windows command prompt (without quotation marks, which the command prompt would store as part of the value) or by running $env:ARM_SUBSCRIPTION_ID="<your subscription guid>" from a PowerShell prompt. Replace the “<your subscription guid>” notation in each command with your Azure subscription’s unique ID value.

Initialize your Terraform project. This command downloads the necessary providers and modules to the working directory.

    terraform init

Before applying the configuration, it is good practice to validate it to ensure there are no syntax errors.

    terraform validate

Create a deployment plan. This step shows what actions Terraform will take to reach the desired state defined in your configuration.

    terraform plan

Review the plan to ensure that only the desired actions are in the plan output.

Apply the configuration and create the resources defined in your configuration file. This command will prompt you to confirm the deployment prior to making changes. Type yes to create your solution’s infrastructure.

    terraform apply

If you are confident in your changes, you can add the -auto-approve switch to bypass manual approval: terraform apply -auto-approve

Once the deployment completes, validate that the infrastructure is configured as desired.
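If the subscription_id error mentioned in the steps above appears and an environment variable is inconvenient, the value can instead be set on the provider itself. This is a sketch under the assumption that your configuration does not already pass the value another way; the variable name `subscription_id` is a hypothetical choice, and if your main.tf already contains a provider "azurerm" block, the argument should be merged into it rather than adding a second block.

```hcl
# Hypothetical input variable carrying the target subscription.
variable "subscription_id" {
  type        = string
  description = "ID of the Azure subscription to deploy into."
}

provider "azurerm" {
  features {}

  # Explicit alternative to the ARM_SUBSCRIPTION_ID environment variable.
  subscription_id = var.subscription_id
}
```

The value can then be supplied at run time, for example through a tfvars file, which keeps the subscription ID out of the committed code.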

A local terraform.tfstate file and a state backup file have been created during the deployment. The use of local state is acceptable for small temporary configurations, but production or long-lived installations should use a remote state configuration where possible. Configuring remote state is out of scope for this guide, but you can find details on using an Azure storage account for this purpose in the Microsoft Learn documentation.

When you are ready, you can remove the infrastructure deployed in this example. Use the following command to delete all resources created by your deployment:

    terraform destroy

Most Key Vault deployment examples activate soft-delete functionality as a default. The terraform destroy command will remove the Key Vault resource but does not purge a soft-deleted vault. You may encounter errors if you attempt to re-deploy a Key Vault with the same name during the soft-delete retention window. If you wish to purge the soft-deleted vault for this example, you can run az keyvault purge -n <keyVaultName> -l <regionName> using the Azure CLI, or Remove-AzKeyVault -VaultName "<keyVaultName>" -Location "<regionName>" -InRemovedState using Azure PowerShell.

Congratulations, you have successfully leveraged Terraform and AVM to deploy resources in Azure!

We welcome your contributions and feedback to help us improve the AVM modules and the overall experience for the community!

For developing a more advanced solution, please see the lab titled “Introduction to using Azure Verified Modules for Terraform”.

You will need the following tools and components to complete this guide: